Industry insiders see this as a natural development toward monetizing generative AI technologies, making them more widely accessible instead of targeting only enterprises through very expensive cloud platforms.

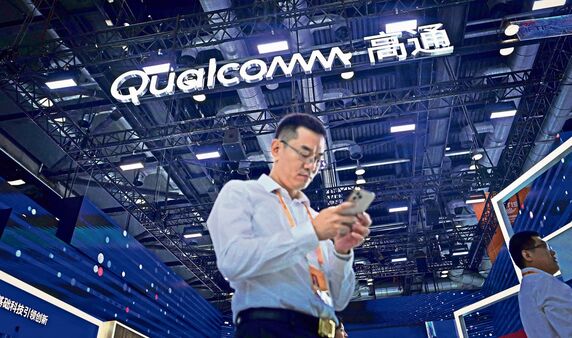

For instance, on 23 February, Qualcomm demonstrated an image-generating AI model running on a device, with Stable Diffusion showcased on an Android smartphone. Subsequently, on 18 July, Meta and Qualcomm jointly launched Llama-2, an LLM which will be available for real-world use by next year.

Experts said this approach is a way forward to address concerns over costs, environmental impact and practicality, and would pave the way for further adoption of AI technology.

Speaking at a media roundtable, Ziad Asghar, senior vice-president of product management, Qualcomm, said: “When an AI model is being trained, it takes all the data from the cloud. This training aspect will happen on the cloud, but now, it is extremely intensive to use it for inference. This is why we’re making AI models smaller and more compressed for running them on-device.”

This evolution in generative AI is natural, Jaspreet Bindra, founder of Tech Whisperer, a technology consultancy firm, said. “This has to be the way for our future, and we can be very confident about it. Capability of AI moving to the edge is already happening, including for offline use cases in various industries like networking base stations, without going back to the cloud. Naturally, the evolution of generative AI, too, will happen in this case, and we’ll need special chips for these use cases, which is also where Qualcomm enters the equation.”

According to Qualcomm, such on-device models, which can run offline and without a constant connection to host cloud platforms, can be deployed across smartphones, laptops, extended reality (XR) headsets and devices, as well as cars and industrial internet-of-things (IoT) devices.

On XR devices, such models can assist in generating immersive visual models locally. They can also tailor driving rules for connected cars equipped with advanced driver assistance systems (ADAS) while they are in motion, by aligning real-time on-road data with the pretrained instructions.

Asghar did not mention specific names, but said that Qualcomm is working with multiple partners, including most brands in the smartphone industry. “We’re also working with multiple automotive brands for ADAS experiences, as well as with many extended reality brand partners. We’ll make more specific announcements at our upcoming Qualcomm Summit,” he added.

An executive at a top smartphone brand in India, seeking anonymity, said teams at most smartphone brands are working with Qualcomm, Microsoft and Google to explore developing generative AI use cases directly on devices.

“Work on such technologies is at an early stage, but we’re already exploring developer implementations of applications such as photo editing and emails, which make for some of the most-used mobile applications in general,” he added.

One use case could be during video conferences, said Bindra. “During a remote conference call, generative AI can listen to a sentence and replace missing words on a transcript, mitigating audio quality issues.”

“Local AI models can also be updated from cloud platforms just like it is with app updates,” the executive added.

Another reason for such development may be the environmental cost of running LLMs on the cloud. “You cannot have huge models just sitting in the cloud, and every user query gets routed to the cloud. This will be important environmentally as well: running cloud AI operations is a huge environmental cost,” Bindra said.

Source: Live Mint